Many developers are probably familiar with code coverage tools such as Clover or Cobertura. These tools measure how much of the source code is executed by a test suite. They give a good overview of which areas of the source code have been tested and where tests have been neglected. This information is important for understanding where additional tests should be added. However, code coverage does not indicate whether the tests are actually effective in finding bugs.

What is Mutation Testing?

Mutation testing provides information about the effectiveness of a test suite. It works in the following way:

- Mutants are created from the original source code, and each one contains a deliberately inserted fault. A mutant might contain, for example, a negated branch condition or a modified constant. A generated mutant might produce exactly the same output as the original program for every input, in which case it is said to be an equivalent mutant. Equivalent mutants are excluded from mutation testing because there is no way for the test suite to detect these kinds of mutants. An example of an equivalent mutant is found in Snippet 1.

- A set of tests from the suite is executed against the mutants. If the tests fail when executed against a mutant, the mutant is said to be killed (which is good in this context). Otherwise the mutant has survived.

- When tests have been executed against all the mutants, a mutation score is calculated: the number of killed mutants divided by the number of all non-equivalent mutants. A higher score indicates a more effective test suite.
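The steps above can be sketched in a few lines of Java. This is only an illustration of the idea, not how PIT or any other tool is implemented: the `TestCase` interface, the age check, and the three hand-written mutants are all hypothetical, and real tools generate mutants automatically (typically at the bytecode or source level).

```java
import java.util.List;
import java.util.function.IntPredicate;

class MutationScoreSketch {

    /** Hypothetical test abstraction: passes when the program gives the expected answer. */
    interface TestCase {
        boolean passes(IntPredicate program);
    }

    /** A mutant is killed when at least one test fails against it. */
    static boolean isKilled(IntPredicate mutant, List<TestCase> suite) {
        return suite.stream().anyMatch(test -> !test.passes(mutant));
    }

    /** Mutation score = killed mutants / all non-equivalent mutants. */
    static double mutationScore(List<IntPredicate> nonEquivalentMutants, List<TestCase> suite) {
        long killed = nonEquivalentMutants.stream()
                .filter(mutant -> isKilled(mutant, suite))
                .count();
        return (double) killed / nonEquivalentMutants.size();
    }

    static double demoScore() {
        // Assumed original program for this sketch: age -> age >= 18
        List<IntPredicate> mutants = List.of(
                age -> age > 18,   // boundary changed
                age -> age < 18,   // condition negated
                age -> age >= 0    // constant modified
        );
        List<TestCase> suite = List.of(
                program -> program.test(30),    // expects "adult"
                program -> !program.test(10)    // expects "not adult"
        );
        return mutationScore(mutants, suite);
    }

    public static void main(String[] args) {
        System.out.printf("mutation score: %.2f%n", demoScore());
    }
}
```

With this suite, the negated condition and the modified constant are killed, while the boundary mutant survives because no test uses the input 18. The score is therefore two out of three.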

https://gist.github.com/sebastianmonte/6b5228653fa1cd5278a0#file-equivalent_mutant-java

Snippet 1: Equivalent mutant [3]
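For readers who cannot load the embedded gist, here is a minimal sketch of what an equivalent mutant can look like. It is an assumption for illustration and not necessarily the code in Snippet 1: the class and method names are made up, and the mutation replaces `<` with `!=` in a loop condition.

```java
class EquivalentMutantSketch {

    // Original: the loop runs while i is below the array length.
    static int sumOriginal(int[] values) {
        int sum = 0;
        for (int i = 0; i < values.length; i++) {
            sum += values[i];
        }
        return sum;
    }

    // Mutant: "<" replaced by "!=". Because i starts at 0 and grows by
    // exactly 1, it always hits values.length, so the loop stops at the
    // same point and the result is identical for every input.
    static int sumMutant(int[] values) {
        int sum = 0;
        for (int i = 0; i != values.length; i++) {
            sum += values[i];
        }
        return sum;
    }

    public static void main(String[] args) {
        int[][] inputs = { {}, {5}, {1, 2, 3}, {-4, 4, 10} };
        for (int[] input : inputs) {
            // Both versions agree, so no test on the result can tell them apart.
            System.out.println(sumOriginal(input) == sumMutant(input));
        }
    }
}
```

Since no input distinguishes the two methods, no test can kill this mutant, which is why such mutants are left out of the mutation score.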

Simple Mutation Testing Example with Java

A sample project can be found at https://github.com/sebastianmonte/test-mutations that contains the source code of the following example. The project uses a tool called PIT [1], which is responsible for creating the mutants and executing the tests against them.

Consider a simple class with a method that checks whether a number is nonnegative:

https://gist.github.com/sebastianmonte/f6f79b64c02a9344d561#file-numberhelper-java

With the appropriate tests:

https://gist.github.com/sebastianmonte/c94b150b4edbeb740868#file-numberhelpertest-java

Test coverage tools would happily report that coverage is 100% and that the source code is thoroughly tested. But what happens when a fault is inserted in the code?

https://gist.github.com/sebastianmonte/e09661a8d940a333115f#file-numberhelpermutate-java

Our tests would still pass, but there is clearly a logical error that goes unnoticed. Agreed, this is a simplification, and we could easily add a boundary test case for the zero input. However, consider a larger program that has thousands of comparisons and operations. How can we be sure that the tests are effective enough to spot these kinds of errors?
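The situation can be reproduced without any tooling. The sketch below is a reconstruction under assumptions, not the gist code itself: it models the check as `n >= 0`, models the inserted fault as `n > 0`, and assumes the existing tests use the inputs 1 and -1. All names are hypothetical.

```java
import java.util.function.IntPredicate;

class SurvivingMutantSketch {

    // Assumed shape of the original check.
    static final IntPredicate ORIGINAL = n -> n >= 0;

    // The inserted fault: ">=" becomes ">".
    static final IntPredicate MUTANT = n -> n > 0;

    // Assumed existing tests: one positive input and one negative input.
    static boolean originalSuitePasses(IntPredicate isNonNegative) {
        return isNonNegative.test(1) && !isNonNegative.test(-1);
    }

    // The same tests plus a boundary case for zero.
    static boolean suiteWithBoundaryPasses(IntPredicate isNonNegative) {
        return originalSuitePasses(isNonNegative) && isNonNegative.test(0);
    }

    public static void main(String[] args) {
        System.out.println("original, existing tests: " + originalSuitePasses(ORIGINAL));
        System.out.println("mutant, existing tests:   " + originalSuitePasses(MUTANT));
        System.out.println("original, with boundary:  " + suiteWithBoundaryPasses(ORIGINAL));
        System.out.println("mutant, with boundary:    " + suiteWithBoundaryPasses(MUTANT));
    }
}
```

Both tests exercise every line of the check, yet they pass against the original and the faulty version alike. Only the zero input separates the two, which is exactly the gap that line coverage cannot reveal.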

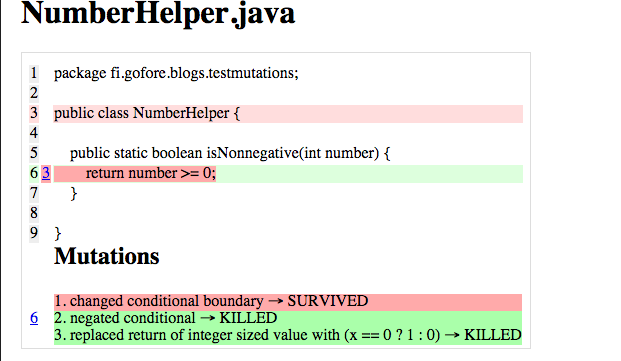

When the test suite is run with PIT, the problem is easily spotted. An example of the PIT test report is seen in Figure 1.

Figure 1: PIT test report

The UI is not the most polished, but it clearly indicates which lines of code have inadequate test cases. Our mutant survives when the comparison is changed from ">=" to ">", indicating that we are not testing the boundary case. By adding the following test case, we can get all the mutants killed:

https://gist.github.com/sebastianmonte/60e7a3830b4a50a11f57#file-numberhelpertest-java

Conclusion

Mutation testing seems powerful, and research indicates that mutation score is a better predictor of real fault detection rate than code coverage [2]. However, it has not yet gained widespread popularity. As one might guess, creating mutants and executing tests against them is not a lightweight process and can take quite a lot of time. PIT, at least, provides a way to limit the classes for which mutants are created, so mutation testing can be restricted to areas where a lot of conditional logic is present.

In addition to finding bugs, mutation testing is a great way to find redundant code, which makes your code cleaner!

In conclusion, I think mutation testing is a good addition to code coverage. I believe it will get more attention in the future once the performance cost is reduced and more tools emerge for developers to use.

[1] http://pitest.org/

[2] http://homes.cs.washington.edu/~rjust/publ/mutants_real_faults_fse_2014.pdf

[3] S. Nica, R. Ramler, and F. Wotawa, "Is mutation testing scalable for real-world software projects?," in Proceedings of the Third International Conference on Advances in System Testing and Validation Lifecycle (VALID 2011), November 2011.