Our customer AimoPark’s earlier infrastructure was mostly hosted in AWS Elastic Beanstalk. At that point the version control platform was GitHub, and CircleCI handled some of the CI/CD steps, though others were still manual.

AimoPark had decided that they needed to renew their infrastructure quite a lot. We opted to get rid of Elastic Beanstalk entirely and go for containerized microservices, with Kubernetes on EKS for the container orchestration. We would be developing new microservices & migrating the older applications to Kubernetes as well. They also needed a modern deployment pipeline for all their new Kubernetes apps.

Starting phases

We initially left the old AWS accounts with the Elastic Beanstalk envs as they were and made new ones for the new infrastructure. The older AWS accounts would eventually be cleaned up & removed once we were done with the migrations.

We built the new infra mostly using Terraform (and a complementary tool called Terragrunt), with the bot users & roles used to run Terraform created with CloudFormation StackSets to escape some chicken & egg problems. From the ground up we wanted to run Terraform only from CI/CD, just like any other code. We ended up initializing a couple of infra-specific repos on GitHub & building the first iterations of the pipelines with CircleCI.

At the same time we’d been looking for artifact store solutions. We ended up going with GitLab.com because it could provide us with a version control platform, an artifact store & a CI/CD platform (among others), all in the same SaaS product. We migrated all the repos from GitHub to GitLab & started work on the new GitLab CI pipelines, loosely based on the CircleCI pipelines that we’d had before. They’ve gone through a bunch of iterations, but the GitLab CI pipelines are what we’re still using.

Defining the pipelines

We’ve broken our pipelines down into some common components & dedicated a repository to them. For instance, we’ve got a pipeline for building Docker images and pushing them to AWS ECR and another for Kubernetes deployments. We’ve also got pipelines for deploying infra-as-code with Terraform & a couple of other pipelines as well. These separate pipelines can be mixed & matched with each other to a point and extended with app-specific custom jobs.

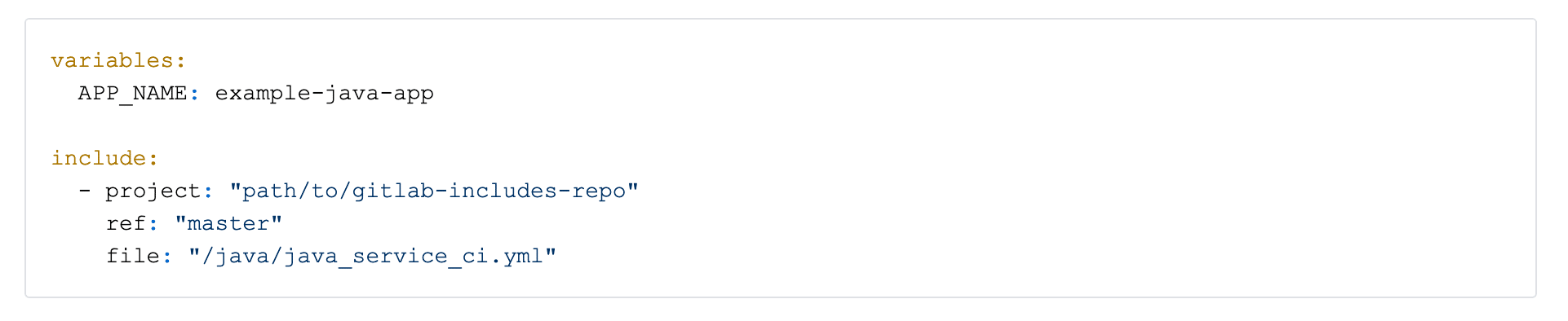

In the end this allows us to have very brief GitLab CI definitions in application repos. For Java services, for example, the definition can be little more than an include of a shared template, and it’ll do all the necessary basic things. The referenced java_service_ci.yml uses both the ECR- & kubectl-pipelines, in addition to having some common Java-specific things like Maven stuff & SonarCloud code analysis.
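As a rough illustration, an application repo’s `.gitlab-ci.yml` could be reduced to something like the sketch below. Only the file name java_service_ci.yml comes from the setup described here; the project path `my-group/ci-templates`, the `ref` and the `APP_NAME` variable are hypothetical placeholders.

```yaml
# .gitlab-ci.yml in an application repo (illustrative sketch)
include:
  - project: my-group/ci-templates   # hypothetical path of the shared pipeline repo
    ref: main                        # assumption: templates are tracked from main
    file: /java_service_ci.yml       # the shared Java pipeline mentioned above

variables:
  APP_NAME: example-service          # hypothetical variable read by the shared jobs
```

App-specific custom jobs can then be added to the same file next to the include.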

The workflow

So here’s how the most common workflow works for us. Developers always work in feature branches that are eventually merged to the main branch. When a developer has pushed some commits & opened a Merge Request in GitLab, the pipeline automatically starts working.

First it builds the final Kubernetes definition .yamls (with all the resources such as Deployments, Services etc.) using a tool called kustomize. With it we can have base configurations that are the same for all envs and overlay configurations that add or modify things depending on the env. After the .yamls are built we run some validations against them with kubectl & some static code analysis with kube-score to improve security & resilience.
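To make the base & overlay idea concrete, here is a minimal sketch of a dev overlay and a job that builds and checks the result. The directory layout, the patch file name and the job name are assumptions for illustration, not our actual configuration.

```yaml
# overlays/dev/kustomization.yaml (hypothetical layout)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - ../../base                 # Deployments, Services etc. shared by all envs
patches:
  - path: replicas-patch.yaml  # hypothetical env-specific tweak on top of the base
```

```yaml
# Hypothetical GitLab CI job: build the manifests, then validate and score them
validate_manifests:
  script:
    - mkdir -p build
    - kustomize build overlays/dev > build/manifests.yaml
    # Client-side dry run; assumes the job already has cluster credentials
    - kubectl apply --dry-run=client -f build/manifests.yaml
    # Static analysis of the rendered resources
    - kube-score score build/manifests.yaml
```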

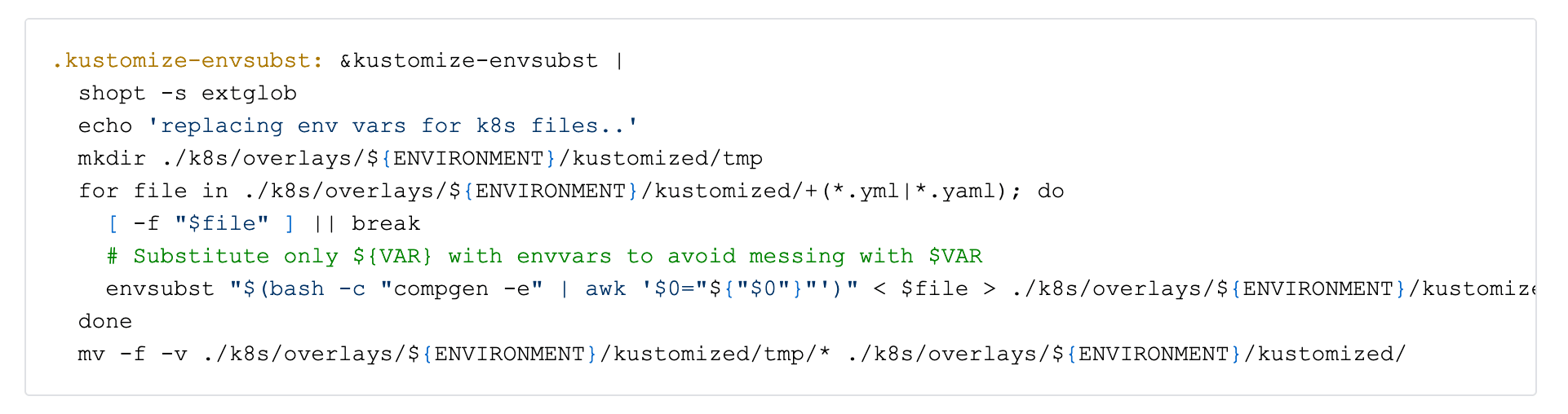

To get environment variables into the .yamls we had to do some envsubst trickery.
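One common form of this trick, shown here as a sketch rather than our exact job, is to pipe the kustomize output through envsubst with an explicit variable list. The job name, variable names and file paths below are hypothetical, and the job image is assumed to contain both kustomize and envsubst.

```yaml
# Hypothetical job; names and paths are placeholders
render_manifests:
  variables:
    IMAGE_TAG: review-$CI_COMMIT_SHORT_SHA
    APP_NAMESPACE: example-app-$CI_MERGE_REQUEST_IID
  script:
    - mkdir -p build
    - kustomize build overlays/dev > build/manifests.tmpl.yaml
    # Listing the variables explicitly makes envsubst leave every other
    # $-expression in the manifests untouched
    - envsubst '${IMAGE_TAG} ${APP_NAMESPACE}' < build/manifests.tmpl.yaml > build/manifests.yaml
```

Without the explicit list, envsubst replaces every `$NAME` it finds, including ones that are meant to reach the container as-is.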

When those jobs pass the pipeline continues & builds the Docker image & pushes it to a registry hosted on Amazon ECR, tagged as a review-image. ECR does image vulnerability scanning on push.

To connect to the EKS cluster & other AWS services the pipeline uses a separate CI/CD user that assumes IAM roles with limited access rights. Early on we made some AWS Lambda functions to automatically rotate the CI/CD access keys frequently for additional security.
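A minimal sketch of how a job might pick up that access, assuming the CI/CD user’s keys are provided as masked CI/CD variables. The cluster name, region, account ID and role name are all placeholders.

```yaml
# Hypothetical hidden job that other jobs could extend; all names are placeholders
.configure_kube_access:
  before_script:
    # AWS_ACCESS_KEY_ID / AWS_SECRET_ACCESS_KEY of the CI/CD user are assumed
    # to be set as masked CI/CD variables; this only confirms they work
    - aws sts get-caller-identity
    # Write a kubeconfig entry that assumes the limited deploy role for kubectl calls
    - aws eks update-kubeconfig --name example-dev-cluster --region eu-north-1 --role-arn arn:aws:iam::123456789012:role/example-ci-deployer
```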

Review environments

The pipeline then leverages a GitLab CI feature called Review Apps and creates a new namespace, suffixed with the Merge Request ID, on our EKS dev cluster. The full app is deployed using an open-source tool called Krane. It uses kubectl under the hood, makes sure that the deployment is successful and prints the relevant events & container logs if the job fails. Naturally, with new pushes, the full deployment to the review namespace is repeated.

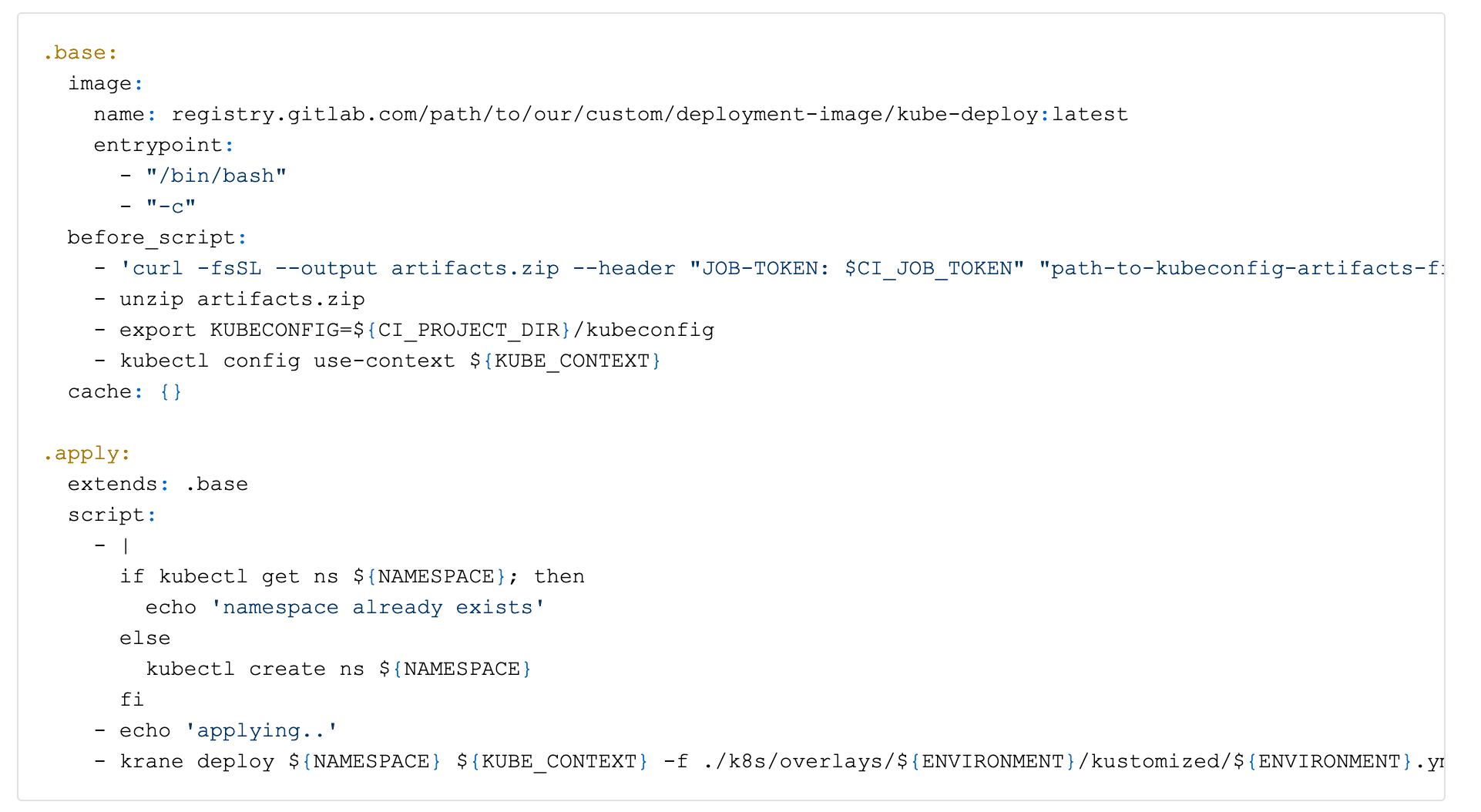

The deployment jobs themselves are pretty simple.
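A review deployment along these lines might look like the following sketch. The namespace prefix `example-app`, the kube context `example-dev-context`, the `build/` directory and the job names are hypothetical; the `environment` keywords are standard GitLab CI.

```yaml
# Hypothetical review jobs; names, context and paths are placeholders
deploy_review:
  script:
    # Create the review namespace if it does not exist yet
    - kubectl create namespace "example-app-$CI_MERGE_REQUEST_IID" --dry-run=client -o yaml | kubectl apply -f -
    # Krane applies the manifests and waits for the rollout to succeed
    - krane deploy "example-app-$CI_MERGE_REQUEST_IID" example-dev-context -f build/
  environment:
    name: review/$CI_MERGE_REQUEST_IID
    on_stop: stop_review
  rules:
    - if: $CI_PIPELINE_SOURCE == "merge_request_event"

stop_review:
  variables:
    GIT_STRATEGY: none        # no checkout needed just to delete a namespace
  script:
    - kubectl delete namespace "example-app-$CI_MERGE_REQUEST_IID"
  environment:
    name: review/$CI_MERGE_REQUEST_IID
    action: stop
  rules:
    - if: $CI_PIPELINE_SOURCE == "merge_request_event"
      when: manual
      allow_failure: true     # keeps the pipeline from blocking on this job
```

The `on_stop` link is what lets GitLab trigger the teardown job when the environment is stopped.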

Developers can then test the app against all the resources in the dev environment before merging. After the MR is merged or closed, the review namespace & all resources in it are mercilessly destroyed by the pipeline.

When the MR is merged, another pipeline is started. It does much the same things as before, but this time deploys the app to the main namespace on the EKS dev cluster. Additional jobs are used to deploy the app to EKS clusters on the staging & production accounts, and these can be behind manual approval steps depending on the app.
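As a sketch of what such gating can look like in GitLab CI, a production job can be marked manual so that someone has to start it from the pipeline view. The job names, namespace and kube contexts below are placeholders.

```yaml
# Hypothetical promotion jobs; names, namespace and contexts are placeholders
deploy_staging:
  script:
    - krane deploy example-app example-staging-context -f build/
  environment:
    name: staging
  rules:
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH

deploy_production:
  script:
    - krane deploy example-app example-production-context -f build/
  environment:
    name: production
  rules:
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
      when: manual            # waits for a person to trigger the job
```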

Final thoughts

Our development teams have ownership over their microservices and are responsible for doing all the deployments & maintaining the Kubernetes configurations etc. by themselves.

For debugging purposes, developers have edit rights to the development cluster, but on the other clusters edit (& thus deployment) rights are limited to just the CI/CD user and a small number of our AWS admins.

As things stand, all of our EKS deployments are done through the CI/CD pipelines. This has mostly worked very well for us, though sometimes it’s hard to tell which versions have been deployed to which env or to coordinate deployments for multiple services. In the future, we might be interested in looking into some GitOps deployment tools like ArgoCD or Flux.